“What made you valuable five years ago might not matter next year.”

Adaptability is the skill that keeps every other engineering competency in this series current. Tools, workflows, and best practices turn over faster than documentation can track. The engineers who stay relevant treat learning as an operational discipline, not a personality trait. This final post covers how to build learning velocity, recognize when your mental models have expired, and create a team culture that absorbs change instead of resisting it.

The previous post on business acumen and ethical leadership argued that understanding business drivers turns you from a task-taker into someone who shapes what gets built, and that ethical judgment needs to show up in design docs and planning, not just post-incident reviews. That post was about what you bring to decisions. This one is about how you keep those contributions current when the ground keeps moving.

Roles and workflows can now shift with a single AI release. The half-life of tooling and workflow knowledge is shrinking: the CI/CD pipeline you mastered in 2023 may be outpaced by an AI-native alternative in 2026, and the prompting patterns that work today may not survive the next model generation. Foundational knowledge (distributed systems design, data modeling, security principles) remains stable and compounds over a career. The layer on top of those foundations (the specific tools, libraries, and practices you use day to day) is the part that keeps changing.

What Got You Here Won't Keep You Here

For years, stability was a selling point. Master a language, go deep in a domain, specialize. That served engineers well when tools and workflows changed on the scale of years, not months.

That pace no longer holds for tooling. AI accelerates execution, and libraries, toolchains, and best practices now shift on the scale of months. An engineer who instrumented their services with OpenTracing and the Jaeger client libraries in 2022 has likely had to migrate to OpenTelemetry by now, as those older APIs were deprecated and vendor support consolidated around the new standard. If you are solving problems with the same tools and the same workflow you used three years ago without questioning whether better options exist, that is inertia, not mastery.

Consider the raw speed: according to the Stanford HAI 2026 AI Index, generative AI reached 53% population adoption within three years, faster than the personal computer or the internet. That's not a trend you can “wait out.” By the time you've finished a lengthy evaluation of a new tool, many of your peers have already absorbed it into their workflow. The window between “emerging” and “table stakes” has collapsed. If your strategy is to adopt once things stabilize, you're already behind.

This does not mean deep expertise has lost its value. The opposite is true: an engineer with strong mental models for distributed tracing will learn OpenTelemetry faster than someone starting from scratch, precisely because the foundational concepts transfer. The risk is not depth itself. The risk is treating a specific implementation of that depth as permanent. Adaptability is what keeps deep knowledge compounding instead of depreciating. Without it, you go stale, regardless of how technically sharp you were last year.

Learning Velocity Multiplies the Value of Depth

Accumulated expertise still matters. Staff and principal engineers at many companies are promoted specifically because of the depth they have built over years. But learning velocity (the speed at which you go from encountering something new to using it effectively on real problems) is what determines whether that depth keeps compounding or starts to plateau. An engineer with ten years of systems experience who can pick up a new observability tool in a week gets more leverage from that depth than one who resists every tooling change.

Consider a concrete scenario. Your team adopts an AI code-review tool mid-sprint, and within a week it is flagging patterns your existing linter misses. One engineer on the team spends two hours reading the tool's documentation, runs it against recent PRs to understand its false-positive rate, and writes a short internal guide on which flags to trust and which to override. Another engineer ignores the tool entirely and keeps reviewing the way they always have. Within a month, the first engineer is the team's go-to resource for AI-assisted review, and their own review quality has improved because they calibrated their judgment against a new signal. No one told them to do this. They just moved faster through the learning curve.

That pattern (small and fast learning cycles applied to real work) is what learning velocity looks like in practice. It is not about chasing every new tool. It is about building a repeatable process for staying sharp:

- Small, fast learning cycles. Spend 30 minutes with a new tool against your actual codebase, not a toy project. Evaluate whether it changes how you work, then move on or go deeper.

- Stay connected to upstream context. Engineers who understand the product, the business model, and the user's pain points learn faster because they can evaluate new tools and techniques against real constraints, not abstract benchmarks.

- Reflect before grinding. When you are stuck, pause and ask whether you are approaching the problem with an outdated mental model before you spend another hour brute-forcing it.
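
The calibration step from the code-review scenario above (running the tool against recent PRs to learn which flags to trust) can be sketched in a few lines. This is a minimal illustration, not any particular tool's API: the flag names, the triage data, and the 30% cutoff are all hypothetical, and it assumes you have already reviewed each flag by hand and recorded whether it pointed at a real issue.

```python
from collections import defaultdict


def false_positive_rates(triaged_flags):
    """Compute the false-positive rate per flag type.

    triaged_flags: iterable of (flag_type, was_real_issue) pairs
    produced by manually triaging the tool's output on recent PRs.
    """
    totals = defaultdict(int)
    false_positives = defaultdict(int)
    for flag_type, was_real_issue in triaged_flags:
        totals[flag_type] += 1
        if not was_real_issue:
            false_positives[flag_type] += 1
    return {t: false_positives[t] / totals[t] for t in totals}


def flags_to_trust(rates, max_false_positive_rate=0.3):
    """Return flag types whose false-positive rate is at or below the cutoff.

    The cutoff is an assumption for illustration; pick one that matches
    how costly a wrong flag is for your team.
    """
    return sorted(t for t, r in rates.items() if r <= max_false_positive_rate)


# Hypothetical triage results from a handful of recent PRs.
triaged = [
    ("null-check", True), ("null-check", True),
    ("null-check", False), ("null-check", True),
    ("naming", False), ("naming", False),
    ("naming", True), ("naming", False),
]

rates = false_positive_rates(triaged)
print(rates)
print(flags_to_trust(rates))
```

The output of something like this is the raw material for the internal guide: a short list of flags worth acting on and a list to treat with skepticism.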

Learning velocity is your most durable advantage in an industry where the tooling layer reinvents itself constantly, even as the foundations underneath it hold steady.

The pace of the tools themselves raises the stakes: the Stanford HAI 2026 AI Index found that on SWE-bench Verified, a benchmark that tests AI on real-world software engineering tasks, performance jumped from 60% to near 100% in one year. The tool that was “interesting but unreliable” last quarter may now be solving some of the same problems you solve, at scale. Your ability to learn and reinvent faster than that improvement curve isn't just a career advantage; it's a survival skill. Standing still no longer means falling behind gradually. It can mean becoming obsolete within a release cycle.

Your Team Culture Is Your Learning System

The scale of this shift is no longer hypothetical. The Stanford HAI 2026 AI Index reports that organizational AI adoption has reached 88%. Nearly nine out of ten organizations are already integrating AI into their operations. Adaptability is no longer a trait that sets you apart on a performance review; it's a baseline expectation in almost every team you'll join. The question isn't whether your organization will change. It's whether your team has the culture to absorb that change without breaking.

Learning is not just individual. It is systemic. A team where people hoard knowledge or avoid asking questions will adopt new tools slowly and unevenly, regardless of how talented the individual engineers are.

Here is what a team with strong collective learning habits looks like in practice. A backend team at a mid-size SaaS company decides to integrate an AI-assisted testing tool. Instead of assigning one person to “figure it out,” they run a structured 90-minute experiment: each engineer generates tests for a module they own, then the team reconvenes to compare results, flag false positives, and decide which parts of the workflow to keep. The session produces a shared understanding of the tool's strengths and limits that would have taken weeks to develop through individual trial and error.

That kind of structured experimentation is built on a few specific norms:

- It is safe to say “I don't know.” Engineers who admit gaps early get help faster. Teams that punish uncertainty get silence, followed by expensive mistakes.

- Code reviews prioritize clarity, not just correctness. A review comment that says “I don't understand why this approach was chosen” is more valuable than one that says “LGTM.” Clarity in reviews teaches the whole team, not just the author.

- Time is carved out for demos, feedback, and exploration. If learning only happens in leftover time after sprint commitments, it won't happen at all.

- Curiosity is modeled by leads. When a tech lead openly experiments with a new tool in a team channel and shares what they found (including what didn't work), it gives everyone permission to do the same.

These norms don't require management buy-in to get started. If you are a mid-level or senior IC without formal authority, you can still shift the culture from where you sit. Run a 30-minute experiment with a new tool and post a brief write-up in your team channel, covering what worked, what didn't, and whether you'd recommend it. Propose a 15-minute “learning spotlight” as an addition to your next retrospective. Pair with a colleague on a task that uses a tool neither of you has tried. When you ask a genuine question in a code review (“I don't understand this pattern, can you walk me through it?”), you make it safer for everyone else to ask questions too. Culture change at the team level often starts with one person doing the thing they wish were normal and making the results visible.

Teams that treat learning as a side project will stagnate together. Teams that build learning into how they operate will absorb change faster than any individual could alone.

The Learning Loop in Practice

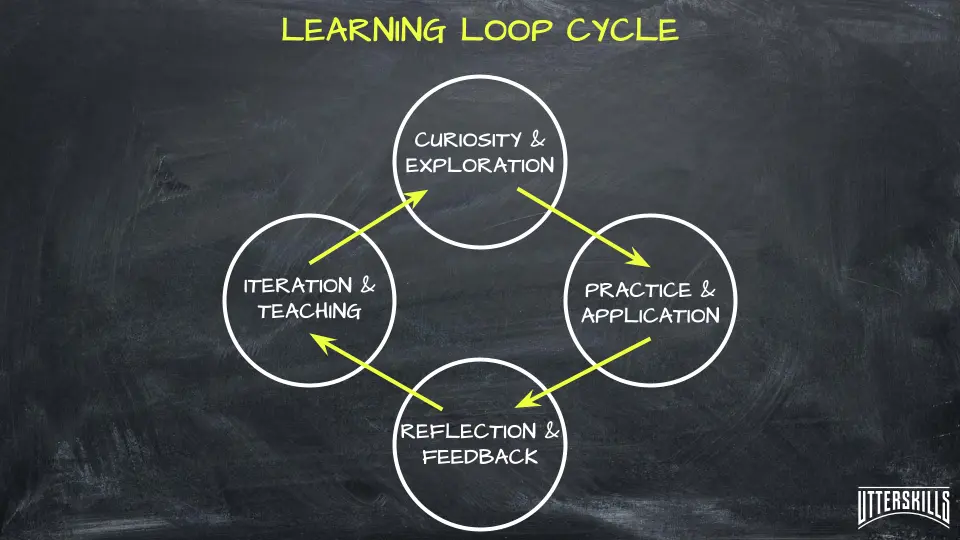

Adaptability runs on a repeatable cycle: curiosity sparks investigation, action tests ideas against real work, reflection captures what worked and what didn't, and sharing turns individual experiments into team capability. The sharing step is what separates individual learning from team learning. Here is what the full cycle looks like when it plays out on a real team.

An engineer on a platform team notices that deploy rollbacks have spiked over the past two weeks, from one or two a month to five. She spends an hour reading about canary release patterns and progressive delivery, specifically how feature flags can gate rollouts to a subset of traffic. The following week, she tests a flag-gated rollout on a non-critical internal service: 5% of requests go to the new version, and she watches error rates for 30 minutes before widening to 50%, then 100%. The deploy succeeds cleanly. She writes up the process in a team wiki page covering what she tried, what worked, what the flag configuration looks like, and when this approach does and does not make sense. At the next team sync, she walks through the write-up. Two other engineers adopt the pattern the following sprint, and rollback frequency drops back to baseline within a month.

That is the full loop: curiosity (noticed the spike), action (read and tested), reflection (evaluated results and documented limits), sharing (wiki page and demo). Each step matters, but the last one is what turns a single experiment into a team capability. An engineer who tries the same canary strategy and keeps the results in their head has learned something. An engineer who writes it up and presents it has multiplied that learning across the group. The engineers who close the loop consistently are the ones whose teams adapt fastest.
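
For readers who have not seen a flag-gated rollout, the mechanics in the example above can be sketched roughly as follows. This is an illustrative sketch under stated assumptions, not the engineer's actual configuration: the function names are hypothetical, the 1% error-rate limit is invented for the example (the post does not give one), and a real feature-flag service would handle the bucketing for you. Only the 5%, 50%, 100% stages come from the story.

```python
import hashlib

STAGES = [5, 50, 100]      # percent of traffic, as in the example above
ERROR_RATE_LIMIT = 0.01    # assumed threshold for illustration only


def in_rollout(request_id: str, percent: int) -> bool:
    """Decide whether a request is served by the new version.

    Hashing the request ID gives a stable bucket from 0 to 99, so the
    same ID always gets the same answer, and every ID included at 5%
    stays included when the flag widens to 50% or 100%.
    """
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100
    return bucket < percent


def next_stage(current_percent: int, observed_error_rate: float) -> int:
    """Return the next rollout percentage after an observation window.

    Returns 0 (roll back) if the error rate exceeds the limit; otherwise
    returns the next larger stage, or the current value if already at
    the final stage.
    """
    if observed_error_rate > ERROR_RATE_LIMIT:
        return 0
    later = [s for s in STAGES if s > current_percent]
    return later[0] if later else current_percent


# Walk through a clean rollout: each window reports a low error rate.
percent = STAGES[0]
while percent < 100:
    percent = next_stage(percent, observed_error_rate=0.002)
    print(f"widening to {percent}%")
```

The deterministic bucketing is the design choice worth noting: because a given ID never flips between versions as the percentage grows, error rates observed at 5% remain comparable to those at 50%.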

Unlearning Is the Most Underrated Skill

Adapting is not only about adding new tools and techniques. It often means letting go of old ones.

This is where adaptability gets uncomfortable. The patterns that used to work, the stack you used to advocate for, the habits that once made you fast, can become the things that hold your team back. A senior engineer who insists on hand-rolling every integration because “that's how we ensure quality” may be blocking the team from adopting a well-tested library that would cut development time in half. Their instinct was right five years ago, when the library didn't exist or wasn't mature. It is wrong now.

Some of the hardest growth moments come from unlearning:

- That ownership means doing everything yourself. Delegation and trust are senior skills, not signs of weakness.

- That control beats collaboration. Tight control over implementation details works at a small scale. It creates bottlenecks at a larger one.

- That anything outside your comfort stack is less worthy. Dismissing unfamiliar tools without evaluation is a form of intellectual stagnation dressed up as standards.

Staying adaptive means staying honest about which of your convictions are based on current evidence and which are based on habit. Being willing to say “that used to be right, it isn't anymore” is one of the clearest signals of engineering maturity.

The Series in Full: What Skills Beyond Code Actually Means

This is the fifth and final post in the Skills Beyond Code in the Era of AI series. Across all five parts, the argument has been consistent: as AI handles more of the routine execution work, the skills that define a strong engineer are shifting toward judgment, communication, leadership, and adaptability.

Part 1 laid out the four competencies (critical thinking, communication, leadership, adaptability) and why CS programs still under-weight them. Part 2 introduced a four-question review process and a blast-radius heuristic for catching mistakes in AI-generated code. Part 3 explored prompt engineering as communication, translating AI output for stakeholders, and how empathy raises the floor of system quality. Part 4 made the case that business acumen is the judgment layer AI cannot replace, and that ethical leadership starts before the code.

This post closes the loop. Adaptability is what keeps the other competencies in this series current. Critical thinking atrophies if you stop questioning your own assumptions. Communication skills lose their edge if you stop adapting to new audiences and tools. Leadership stalls if you cling to approaches that worked in a previous context. Business judgment becomes outdated if you stop learning how your industry and your users are changing.

The engineers who will have the strongest careers over the next decade are the ones who keep all five of these muscles in use: thinking clearly, communicating well, leading with judgment, understanding the business, and treating their own knowledge as something that needs regular maintenance. What made you valuable five years ago might not matter next year. The question is whether you will notice before someone else points it out.

Frequently Asked Questions

How is adaptability different from just “keeping up with new tools”?

Keeping up with tools is one piece of it, but adaptability is broader. It includes revising how you approach problems, how you collaborate, and which assumptions you carry into decisions. An engineer who adopts a new CI/CD platform but still scopes work the same way they did five years ago has updated their toolchain without updating their thinking. Real adaptability means your mental models evolve alongside your tools.

What can I do if my team doesn't prioritize learning?

Start small and make the results visible. Run a 30-minute experiment with a new tool and share a brief write-up of what you found. Propose a 15-minute “learning spotlight” as an addition to your next retrospective. When one person consistently surfaces useful insights from small experiments, it tends to shift team norms faster than any top-down mandate. You don't need permission to learn in public.

Is “unlearning” really a skill, or is it just changing your mind?

Changing your mind is the outcome. Unlearning is the process: recognizing that a belief or habit you hold is based on outdated evidence, sitting with the discomfort of that realization, and actively choosing a different approach. Most engineers can change their mind when presented with proof. The harder skill is noticing when your current approach has stopped working before someone else has to point it out.
