“You're not just coding with AI. You're working through it, and accountable for every word it writes.”

Modern software engineering no longer runs purely on syntax and logic. It runs on communication, and that communication now flows in two directions: human-to-human and human-to-AI. This post is for software engineers navigating that shift. It covers how prompt engineering is fundamentally a communication skill, how empathy raises the floor of system quality, and how team rituals like standups and design reviews need to evolve for AI-assisted workflows.

The previous post in this series covered how critical thinking turns code review into real engineering oversight: a four-question framework (assumptions, downstream consequences, goal alignment, and failure cost) and a blast-radius heuristic for catching mistakes in AI-generated code. That post focused on catching problems. This one is about preventing them, by making sure the right information reaches the right people (and the right models) before the code is even written.

In these hybrid workflows, you are more than a builder. You are the interface, translating messy product intent into structured prompts, interpreting AI outputs, and aligning the results with human goals.

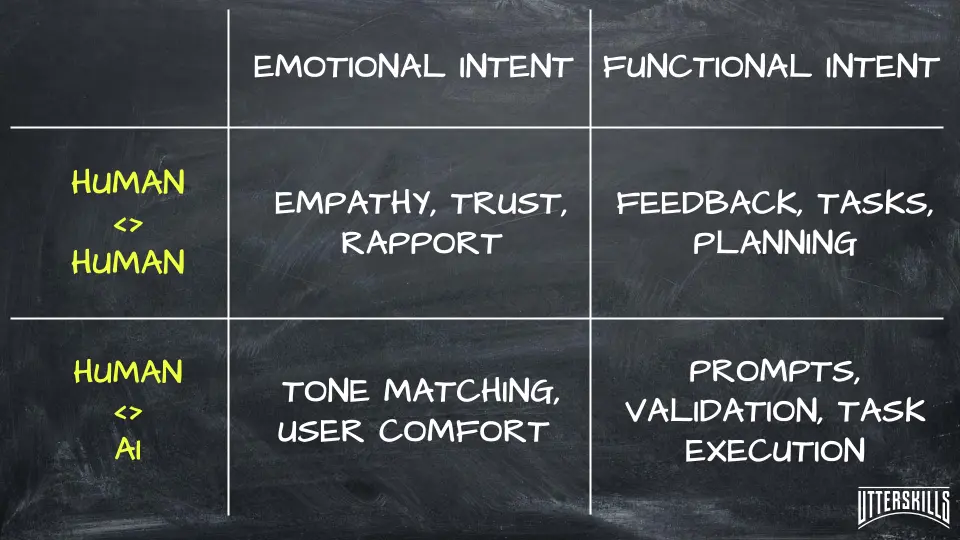

Communication skills vary by intent (emotional vs. functional) and target (human vs. AI). This quadrant maps the new communication demands engineers must master.

Prompt Engineering Is Communication

Prompt engineering is a communication skill. It requires the same clarity you would bring to an API spec, except your collaborator cannot ask follow-up questions.

AI does not know your system, your domain model, or your priorities. If you want reliable results, you have to specify exactly what is needed: goal, context, constraints.

Compare these two prompts:

Vague:

“Write a function for users.”

Specific:

“Write a Python function calculate_discounted_price(original_price, discount_percentage) that takes a float original_price and an integer discount_percentage (0–100) and returns the final price after applying the discount. Cap discounts over 100% at 100 and treat negative discounts as 0.”

The second prompt specifies input types, boundary behavior, and naming. It reflects the kind of clarity you would give a teammate who will never ask a follow-up question. The first leaves every decision to the model, and you will spend the saved keystrokes debugging whatever the model decides on its own.
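For reference, here is a minimal sketch of what the specific prompt could reasonably produce. It is one plausible reading, not the only correct one: it assumes the negative rule applies to the discount rather than the price, and it does no rounding or validation of original_price, because the prompt does not ask for either.

```python
def calculate_discounted_price(original_price: float, discount_percentage: int) -> float:
    """Return the price after applying a percentage discount.

    Discounts above 100 are capped at 100; negative discounts are treated as 0.
    """
    # Clamp the discount into the 0-100 range, as the prompt specifies.
    clamped = max(0, min(100, discount_percentage))
    return original_price * (100 - clamped) / 100


print(calculate_discounted_price(80.0, 25))   # 60.0
print(calculate_discounted_price(50.0, 150))  # 0.0  (discount capped at 100)
print(calculate_discounted_price(50.0, -10))  # 50.0 (negative discount treated as 0)
```

Even here, the prompt leaves decisions open, such as currency rounding and what to do with a negative price. Those gaps are where the model will decide for you.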

Great prompt engineering comes from the same skills that make you a great reviewer or lead: the ability to articulate what matters and why. This is communication at a technical level.

When Syntax Is Clear but Context Is Missing

The calculate_discounted_price example shows how specificity improves results. But some prompt failures have nothing to do with vagueness. They happen when the prompt is syntactically precise and still omits the context the model needs.

Consider an engineer who asks the AI to generate a database migration: “Add a last_login_at timestamp column to the users table, nullable, default null, with an index.” That prompt is well-structured. It names the table, the column type, the constraints, and the indexing requirement. The AI generates a clean migration that works locally.

In production, it causes a split-brain conflict. The users table is replicated across three regions with eventual consistency, and the migration triggers a schema change that the replication layer cannot resolve atomically. The prompt never mentioned the replication topology because the engineer was thinking about the column, not the deployment environment.

The fix is not more precise syntax. It is communicating the system context the model has no way to infer on its own. This is the same principle behind Part 2's first review question, “what assumptions is this output making?”, applied at the prompting stage instead of the review stage. The engineers who write effective prompts are usually the same ones who write effective design docs. The skill transfers directly.
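One way to make that habit concrete is to treat goal, context, and constraints as required fields rather than optional prose. The sketch below is illustrative only: PromptSpec and render are hypothetical names, not part of any library, and the constraint wording is an assumption layered on the migration example.

```python
from dataclasses import dataclass, field


@dataclass
class PromptSpec:
    """Hypothetical container for the three parts of a prompt: goal, context, constraints."""
    goal: str
    context: list[str] = field(default_factory=list)
    constraints: list[str] = field(default_factory=list)

    def render(self) -> str:
        """Render the spec as plain text, refusing to proceed without system context."""
        if not self.context:
            raise ValueError("No system context given; the model cannot infer it.")
        lines = [f"Goal: {self.goal}", "Context:"]
        lines += [f"- {item}" for item in self.context]
        lines.append("Constraints:")
        lines += [f"- {item}" for item in self.constraints]
        return "\n".join(lines)


# The migration request, with the deployment context stated explicitly.
migration = PromptSpec(
    goal="Add a nullable last_login_at timestamp column (default null) "
         "with an index to the users table.",
    context=["The users table is replicated across three regions "
             "with eventual consistency."],
    # Assumed wording for illustration; a real constraint depends on your replication layer.
    constraints=["The schema change must be safe for the replication layer to apply."],
)
print(migration.render())
```

The empty-context check is deliberately blunt. It will not tell you which context is missing, but it forces the question before the prompt is sent.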

Translating AI Output for Stakeholders

AI tools generate solutions. You are often the one who has to explain them to others.

Maybe a PM is wondering why the AI's suggested data model does not match user needs. Maybe a non-technical stakeholder needs to understand why an AI-generated recommendation violates privacy policy. Maybe QA is asking why an auto-generated test suite skipped a high-risk edge case.

Each of those conversations requires mapping machine logic to human context: business goals, team culture, user expectations. The ability to bridge that gap is what ensures decisions are understood, aligned, and actionable.

Poor translation leads to missed priorities, rework, and eroded stakeholder trust. When the communication gap between engineering and product is wide, a PM without visibility into a technical trade-off will often defer to whatever sounds most confident, and that is frequently the AI's default suggestion. An engineer who can restate the trade-off in terms of customer risk and delivery timeline changes the conversation entirely.

Engineering earns strategic credibility by making output legible to the people who decide what gets built next.

How Empathy Shapes System Quality

Empathy helps you see around corners. It is how you anticipate how something will be used, or misused. It is the skill that lets you catch a tone mismatch in an AI-generated user-facing message, or sense that a junior developer does not fully trust the tool you just introduced.

That interpersonal dimension matters more than it looks on paper. Picture a design review where the AI has proposed a caching strategy and the team is moving to approve it. One engineer has been quiet the whole time. You could move on, or you could ask: “You look like you have a concern. What are you seeing?” It turns out they worked on a similar caching layer at a previous company and watched it fail under write-heavy load, exactly the pattern your system is approaching. That five-second question, driven by noticing a teammate's hesitation, surfaces a risk that no amount of prompt clarity would have caught.

Empathy also extends to how you think about users and the systems they depend on. Engineers who think about real user behavior, not just ideal behavior, build more resilient software:

- How would this fail in production, not just pass in tests?

- Is this solution intuitive for the person debugging it in six months?

- Are we designing for inclusion, accessibility, and clarity, or just happy-path speed?

Empathy raises the floor of code quality. It prevents technical debt by surfacing assumptions early. It improves user experience by catching mismatches between intent and delivery. In AI-heavy workflows, it is how you protect against building software that passes every test but fails every real user.

The first question in Part 2's four-question framework (assumptions, downstream consequences, goal alignment, failure cost) is “what assumptions is this output making?” Empathy is what lets you answer that question from the user's perspective, not just the system's.

How AI Changes Engineering Team Collaboration

The old model was straightforward: break down the work, divide the tickets, ship the code. Now collaboration also means:

- Pairing with AI tools that are fast but context-free. Working with an AI assistant is like working with a contractor who delivers fast but has never seen your codebase, your on-call rotation, or last week's outage.

- Creating shared norms around which AI outputs to trust, override, or escalate.

- Managing different comfort levels with AI across the team. Some engineers lean on AI for everything. Others distrust it entirely. Both extremes create risk.

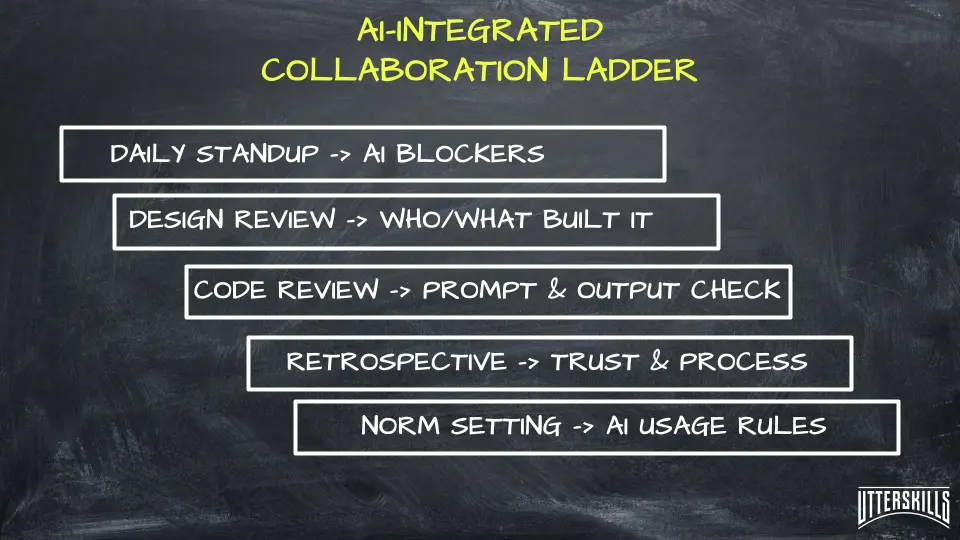

Your team rituals need to evolve. Not by adding new ceremonies, but by updating the ones you already have.

Standups

“I used Copilot for the auth handler, but I am not confident about the token refresh logic. I want a second pair of eyes.” That sentence takes five seconds and changes the quality of the review that follows.

Design Reviews

“The AI suggested this schema. Here is why I kept it, and here is the assumption I overrode.” Making AI contributions visible lets the team calibrate trust collectively instead of each engineer deciding alone.

Retrospectives

“We shipped an AI-generated migration without enough scrutiny. What signal did we miss, and how do we catch it next time?” Retros already exist to improve process. AI-related failures belong there.

Each of these small additions builds a shared vocabulary for how the team works with AI. Without that vocabulary, each engineer develops their own habits, and those habits diverge fast. One person rubber-stamps everything from the model. Another rewrites every suggestion from scratch. Neither approach is optimal, and neither is visible to the rest of the team unless you talk about it.

Together, these updates build AI awareness into existing team rituals, from standups to retrospectives, creating a repeatable process for alignment, oversight, and trust.

Try This Week: The Three-Sentence Translation

Pick one technical decision from your current sprint that involves a non-technical stakeholder. Write a three-sentence summary:

- What you are doing (plain language, no jargon).

- Why it matters to the user or the business.

- What it costs (time, risk, or trade-off).

Example: “We are replacing the search indexer with a new implementation. The current one returns stale results for about 12% of queries, which drives support tickets. The migration will take the search offline for roughly 30 minutes during a low-traffic window.”

That is the kind of translation that turns an engineering update into a decision a PM or executive can actually act on. Practice it once this week. You will use it constantly once it becomes a habit.

Why You Are the Interface Between AI and Your Team

AI is a new collaborator, and you are its interface. Every time you prompt, review, explain, or align, you are doing the work that holds the system together. Clear communication and empathy function as collaboration protocols in a world where half your output is machine-assisted and all of it is still human-accountable.

The engineer who can prompt clearly, translate effectively, and collaborate across comfort levels is the one who keeps the team aligned. That is what makes you consistently valuable, and the reason your team needs you in the loop.

Frequently Asked Questions

Is prompt engineering a communication skill?

Yes. Prompt engineering requires the same clarity you would bring to an API spec or a design doc: defining the goal, providing context, and specifying constraints. The difference is that your collaborator cannot ask follow-up questions, so every ambiguity you leave in the prompt becomes a decision the model makes without you. Engineers who communicate well with people tend to write better prompts for the same reason.

How does empathy improve software quality?

Empathy lets you anticipate how real users will interact with a system, not just how ideal users would. It surfaces assumptions early (a tone mismatch in an AI-generated message, an accessibility gap, a workflow that breaks under edge-case input) and it helps you read teammates well enough to draw out concerns they have not voiced. Both of those catch problems that no test suite or linter will flag on its own.

How should engineering teams collaborate with AI tools?

Teams need shared norms for when to trust, override, or escalate AI-generated output. The most practical approach is updating rituals you already run: mention AI contributions in standups so reviewers know where to look, make AI-suggested trade-offs visible in design reviews, and include AI-related misses in retrospectives. Without that shared vocabulary, each engineer develops isolated habits that diverge fast and create blind spots.

Next in this series: Beyond the Feature: Business Acumen and Ethical Leadership explores how engineers who understand business drivers and ethical trade-offs help define what gets built in the first place.
